A cluster network is a type of computer network in which all nodes communicate with each other through a central node. The central node typically does not have a dedicated connection to the rest of the network, but it has a connection to every node in the cluster.
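
The hub-and-spoke arrangement described above can be sketched in a few lines of Python. This is a minimal in-memory model, not a real network implementation: the `Hub` and `Node` classes and their methods are hypothetical names chosen for illustration, and messages are plain Python objects rather than packets.

```python
class Hub:
    """Hypothetical central node: linked to every cluster node, relays messages."""

    def __init__(self):
        self.nodes = {}  # node name -> Node

    def attach(self, node):
        # Give the hub a connection to the node, and the node a link to the hub.
        self.nodes[node.name] = node
        node.hub = self

    def relay(self, sender, recipient, payload):
        # Every message passes through the hub; nodes never talk directly.
        if recipient not in self.nodes:
            raise KeyError(f"unknown node: {recipient}")
        self.nodes[recipient].inbox.append((sender, payload))


class Node:
    """Hypothetical cluster node that only knows about the hub."""

    def __init__(self, name):
        self.name = name
        self.hub = None
        self.inbox = []

    def send(self, recipient, payload):
        if self.hub is None:
            raise RuntimeError("node is not attached to a hub")
        self.hub.relay(self.name, recipient, payload)


hub = Hub()
a, b = Node("a"), Node("b")
hub.attach(a)
hub.attach(b)
a.send("b", "hello")
print(b.inbox)  # [('a', 'hello')]
```

Note that the sender only needs a reference to the hub, never to the recipient itself, which is the property the definition relies on.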

Cluster networks are often used for distributed computing because they can handle large volumes of traffic efficiently and tolerate the failure of one or more nodes. They are also popular among research institutions that need to share data across multiple laboratories: researchers in different locations can work together on projects without expensive dedicated hardware connections between labs.

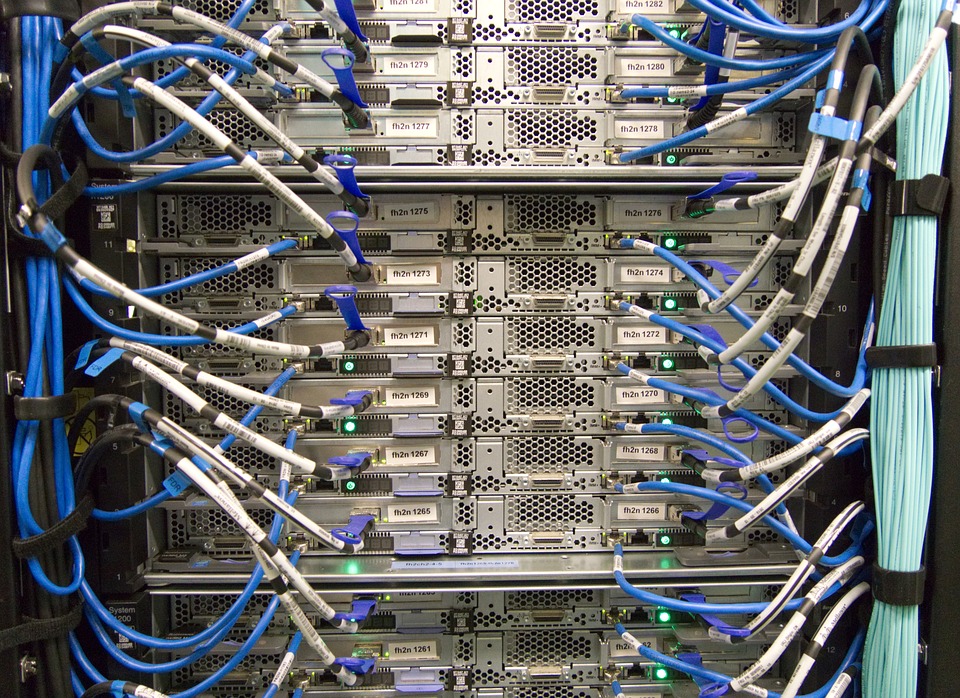

A cluster-network system could consist of many thousands or even millions of individual computers linked into clusters that span continents. Each node can access the information held on any other node in the system, and that information is kept up to date. All cluster nodes operate simultaneously, so users can query any data they wish and receive a prompt response.

A network like this would be extremely expensive to build with physical hardware connections between all computers. However, software systems exist that create virtual networks over existing commodity Internet connections, without special hardware or dedicated support staff. Administrators of such a network could continuously monitor its status using centralized monitoring tools together with frameworks designed for automatic discovery and auto-configuration (e.g., JXTA).

The cost savings come from replacing one powerful machine with many machines that are each far less powerful, and far cheaper, than the single machine the workload would otherwise require. The savings in equipment cost can be reinvested in more computing power or storage capacity. Another benefit is increased redundancy: the cluster continues to operate even if some nodes go offline for extended periods.

A cluster network is typically composed of multiple machines working together seamlessly, so that each client machine only needs to address a single system via one IP address. This supports growing loads efficiently: an individual computer is limited by its hardware, whereas a cluster can add machines, and several operating systems can share the same hardware through virtualization technology without interfering with each other’s functionality.

For example, one central device could control all file transfers between clients, distributing outbound traffic across the available connections and accepting incoming data on demand from any of the connections to other cluster nodes. All users would go through this device to reach shared files and directories, so they would not need the IP address of any individual node: their computers simply exchange packets with the central device.
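
One simple way a central device could spread outbound transfers across its connections is round-robin assignment. The sketch below assumes that policy, which the text does not specify, and uses a hypothetical `Distributor` class with made-up connection names such as `link-0`. A real device might instead weigh connections by load or bandwidth.

```python
from itertools import cycle


class Distributor:
    """Hypothetical central device that spreads transfers over connections."""

    def __init__(self, connections):
        if not connections:
            raise ValueError("at least one connection is required")
        # cycle() repeats the connections endlessly in their original order.
        self._next = cycle(connections)

    def assign(self, transfer):
        # Round-robin: each new transfer takes the next connection in turn.
        return transfer, next(self._next)


distributor = Distributor(["link-0", "link-1", "link-2"])
assignments = [distributor.assign(f"file-{i}") for i in range(5)]
for transfer, link in assignments:
    print(transfer, "->", link)
```

With three connections and five transfers, the fourth and fifth transfers wrap around to `link-0` and `link-1`, so no single connection carries all the traffic.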

Fault tolerance can be improved by pairing a single primary server with several backup servers, which all store the same data and exist mainly to provide redundancy in case of failure. If the primary server goes down, a backup takes over so that no information is lost. Additional redundant servers can be added so that the simultaneous failure of more than one server is merely inconvenient rather than disastrous.
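
The primary-and-backup scheme can be illustrated with a small in-memory sketch. It assumes that every write is copied to all live servers and that the first live server in a fixed list acts as primary. The `Server` and `ReplicatedStore` names are hypothetical, and failure is simulated by flipping a flag rather than detected over a network.

```python
class Server:
    """Hypothetical server holding a copy of the shared data."""

    def __init__(self, name):
        self.name = name
        self.alive = True
        self.data = {}


class ReplicatedStore:
    """Sketch: one primary plus backups that hold the same data."""

    def __init__(self, servers):
        self.servers = list(servers)  # the first live server acts as primary

    def primary(self):
        for server in self.servers:
            if server.alive:
                return server
        raise RuntimeError("no live server left")

    def write(self, key, value):
        self.primary()  # raises if every server is down
        # Copy each write to every live server so the backups stay in sync.
        for server in self.servers:
            if server.alive:
                server.data[key] = value

    def read(self, key):
        return self.primary().data[key]


store = ReplicatedStore([Server("primary"), Server("backup-1"), Server("backup-2")])
store.write("report", "v1")
store.servers[0].alive = False  # simulate a failure of the primary
print(store.primary().name)     # backup-1
print(store.read("report"))     # v1
```

Because the backup already holds the data, the read still succeeds after the primary fails. The sketch deliberately leaves out recovery: a server that comes back after missing writes would hold stale data and would need to resynchronize before serving requests again.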