In the rapidly evolving landscape of AI-driven graphics, Meta’s AI4AnimationPy stands out as a specialized, open-source framework designed to unify character animation and neural network training within a single Python environment.

Source: https://github.com/facebookresearch/ai4animationpy

Originally rooted in the widely known AI4Animation project for Unity, this Python-native evolution removes the heavy dependency on game engines, allowing researchers and developers to iterate on motion models without leaving the PyTorch ecosystem.

The Architecture: “Game Engine” in Pure Python

What sets AI4AnimationPy apart is its Entity-Component-System (ECS) architecture, a design pattern more commonly found in game engines such as Unity or Unreal. By implementing this in Python, the framework provides:

- Modular Lifecycle Management: Objects (Entities) are defined by data (Components) and manipulated by logic (Systems), making it easy to swap out neural network controllers or physics solvers.

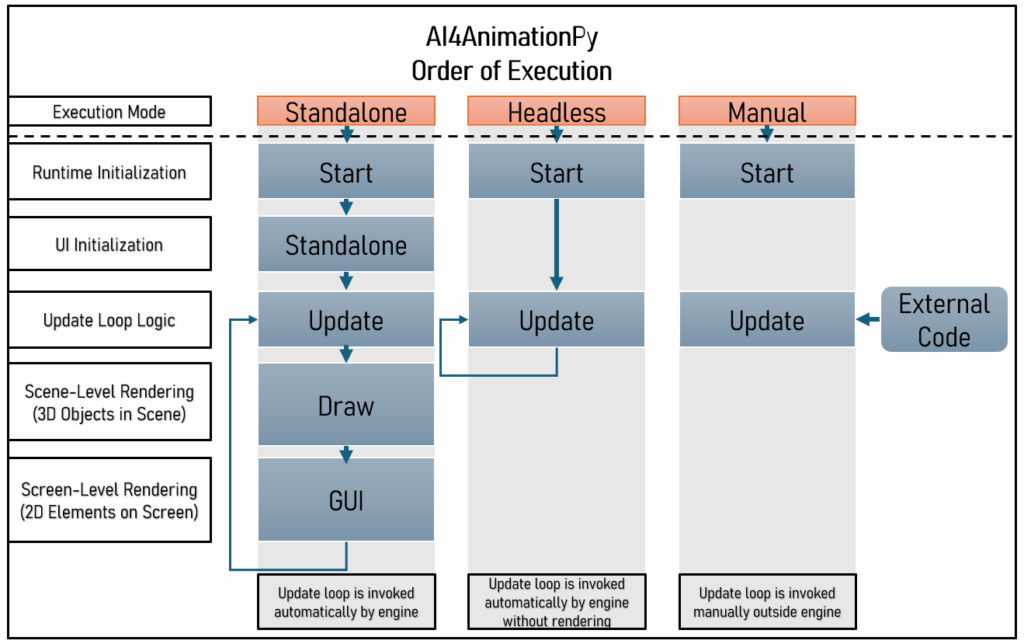

- Unified Update Loops: It features game-engine-style callbacks like `Update`, `Draw`, and `GUI`, which allow for real-time visualization of a character’s “brain” (the neural network) while it executes motion.

- Vectorized Math Library: A custom library handles complex 3D transformations, such as quaternions, forward kinematics (FK), and axis-angle rotations, optimized for NumPy and PyTorch.
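
To make the entity, component, and system split more concrete, here is a minimal sketch in plain Python. It is not AI4AnimationPy's actual API: every name in it (`Transform`, `Velocity`, `Entity`, `MovementSystem`, `run`) is hypothetical and chosen only for illustration, and the `draw` step simply returns text where a real framework would render.

```python
from dataclasses import dataclass, field


@dataclass
class Transform:
    """Component: plain data, no behaviour (hypothetical name)."""
    x: float = 0.0
    y: float = 0.0


@dataclass
class Velocity:
    """Component: units per second along each axis (hypothetical name)."""
    dx: float = 0.0
    dy: float = 0.0


@dataclass
class Entity:
    """An entity is a name plus a bag of components keyed by their type."""
    name: str
    components: dict = field(default_factory=dict)

    def add(self, component):
        self.components[type(component)] = component
        return self  # returning self allows chained .add() calls

    def get(self, kind):
        return self.components.get(kind)


class MovementSystem:
    """System: logic applied to every entity that has the required components."""

    def update(self, entities, dt):
        for e in entities:
            t, v = e.get(Transform), e.get(Velocity)
            if t is not None and v is not None:
                t.x += v.dx * dt
                t.y += v.dy * dt

    def draw(self, entities):
        # Stand-in for rendering: one text line per entity with a Transform.
        return [
            f"{e.name} @ ({e.get(Transform).x:.2f}, {e.get(Transform).y:.2f})"
            for e in entities
            if e.get(Transform) is not None
        ]


def run(entities, systems, steps, dt):
    """Fixed-step loop: every system updates, then every system draws."""
    frames = []
    for _ in range(steps):
        for s in systems:
            s.update(entities, dt)
        for s in systems:
            frames.append(s.draw(entities))
    return frames


hero = Entity("hero").add(Transform()).add(Velocity(dx=1.0, dy=0.5))
prop = Entity("prop").add(Transform(x=3.0))  # no Velocity, so it never moves
frames = run([hero, prop], [MovementSystem()], steps=4, dt=0.25)
print(frames[-1])
```

Because the movement logic lives in a system rather than in the entities, replacing `MovementSystem` with, say, a controller driven by a neural network would not require touching the component definitions. That is the modularity the list above refers to.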

Key Capabilities

AI4AnimationPy is built for high-fidelity character control, moving beyond simple video generation to interactive, physics-aware motion.

- Real-time Inverse Kinematics (IK): Features built-in solvers (like FABRIK) to ensure feet plant correctly on uneven terrain or hands reach precisely for objects.

- Neural Network Integration: Native support for Multi-Layer Perceptrons (MLP), Autoencoders, and Codebook Matching. Because it runs on PyTorch, users can perform backpropagation through the inference loop, a feat nearly impossible in traditional game engines.

- Motion Capture Processing: The framework supports `.npz`, `.fbx`, and `.bvh` formats, allowing users to import raw motion data and visualize joint trajectories or contact points instantly.

- Built-in Renderer: It includes a standalone rendering pipeline capable of deferred shading, SSAO, bloom, and shadow mapping, providing high-quality visual feedback without external software.

Why It Matters

Historically, research in AI animation was fragmented: models were trained in Python/PyTorch, but their results had to be exported to Unity via ONNX or data streaming to be visualized. This “bottleneck” often took hours of setup.

AI4AnimationPy slashes this overhead. Generating training data that once took hours in Unity can now be done in minutes. By providing a Headless Mode for server-side training and a Standalone Mode for interactive demos, it serves as a complete laboratory for the next generation of digital humans and robotic simulations.

Technical Note: AI4AnimationPy requires Python 3.12+ and is heavily optimized for NVIDIA GPUs using CUDA, ensuring that even complex skinned mesh rendering remains fluid during training.